Until now I have not worked too much on it, some weeks last year and some bug fixing here and there.

Now, with the last Unite demo done, I didn't have much else to do, so I decided to put all my efforts here.

From what I see on Twitter, I laid the first stone of Fog Volume 3 in October. I got really motivated and have not stopped yet. It's been 3 months working an average of 15h/day. Sometimes more than 30 hours without sleeping. Sleep 4 and work another 20. And #repeat till death#

But today I started to see the light at the end of the tunnel. My task list is getting thinner now. And some bigger tasks will be postponed for future versions.

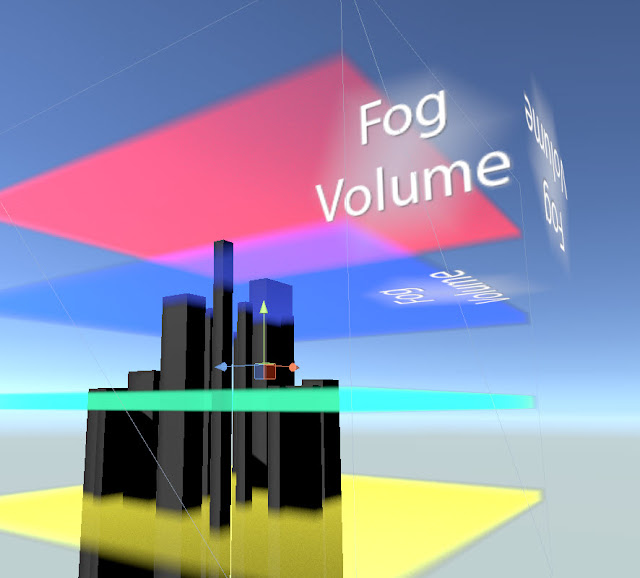

So, what can Fog Volume 3 do? Let's watch some short videos (sorted by date):

A very important part of every piece of software is making it painless for the user. Fog Volume 3 is HUGE, frigging huge, so dealing with 3 vertical screens of weird parameters was not an option. For that reason, I wrote a modular interface:

Having said what Fog Volume is, let's talk about what it is not.

Fog Volume is not:

- Time of day system

- Weather system

- Atmosphere system

Transparency:

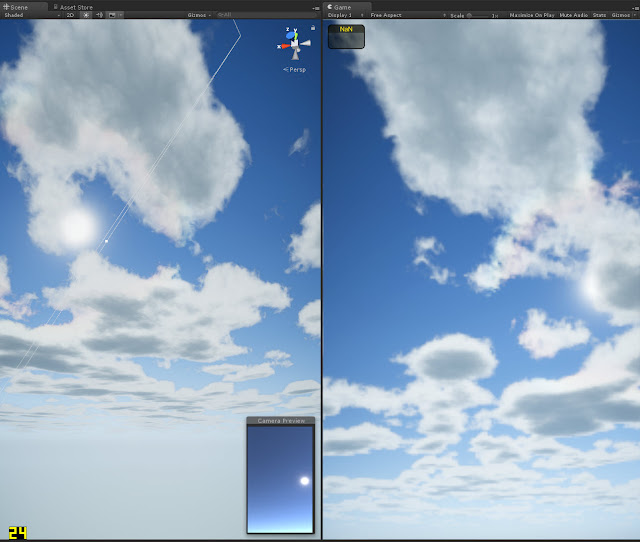

I bet nobody has solved the problem of blending transparent shaders with raymarch shaders. Fog Volume will suffer from the same. What can you do? You can have a Fog Volume in the background, so you can use it for high clouds, but don't ever put transparent objects inside the volume.

In case you don't like Unity's default fog and want a different method in your project, let's say realistic scattering, height fog or whatever... you will have to code a whole new shading core. I have done that several times, but never released any of it to the public, because it's a deep modification that needs a coder in the project to integrate and maintain the system.

Performance:

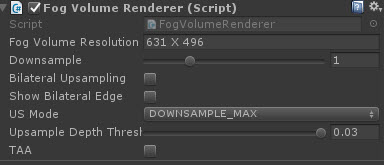

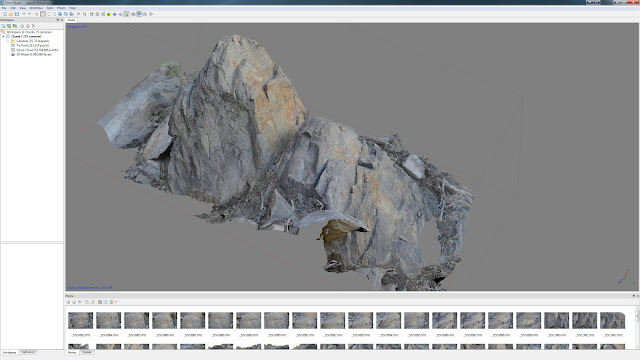

Raymarching is expensive by definition (almost). I tried to find where I was wasting GPU power in the older version, and as a result v3 can do more with less. Apart from that, bearing in mind that the noise input is a 3D texture of 128 pixels, we may think that rendering it downscaled won't be a drama. And that's what I did: I included a module to render it with a secondary camera. I put a script on the main camera that gives access to this secondary camera's properties. I would have placed it in the Fog Volume object, but this is a global process, common to n Fog Volumes.

In this script we have some parameters:

- Downscale

- Upsampling options

- TAA, made by the Inside team and other important researchers.
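To give a feeling for why raymarching costs what it costs, and why downscaling helps so much, here is a minimal sketch in Python. It is not the Fog Volume shader: `density` is a hypothetical stand-in for the 3D noise texture lookup, and the step count, ray length and absorption value are assumptions picked for illustration.

```python
import math

def density(x, y, z):
    """Hypothetical stand-in for the 3D noise lookup: a soft blob at the origin."""
    return max(0.0, 1.0 - math.sqrt(x * x + y * y + z * z))

def march_ray(origin, direction, steps=32, length=2.0, absorption=2.0):
    """Accumulate opacity along one ray with fixed-size steps (Beer-Lambert)."""
    step = length / steps
    transmittance = 1.0
    for i in range(steps):
        t = (i + 0.5) * step  # sample at the middle of each step
        p = [o + d * t for o, d in zip(origin, direction)]
        transmittance *= math.exp(-density(*p) * absorption * step)
    return 1.0 - transmittance

def samples_per_frame(width, height, steps, downscale=1):
    """Density lookups per frame when rendering at 1/downscale resolution."""
    return (width // downscale) * (height // downscale) * steps

full = samples_per_frame(1920, 1080, 32)
quarter = samples_per_frame(1920, 1080, 32, downscale=4)
print(full, quarter, full // quarter)
```

Every pixel pays for every step, so a downscale of 4 on each axis cuts the number of lookups by 16. The upsampling options and the TAA are there to hide the resolution loss afterwards.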

VR:

VR is possible, but at what cost? Dunno yet. The initial release won't support rendering the effect down-sampled, but I will work on that aspect after the release. Rendering the effect at full scale won't produce any issues in VR.

Volumetric Shadows:

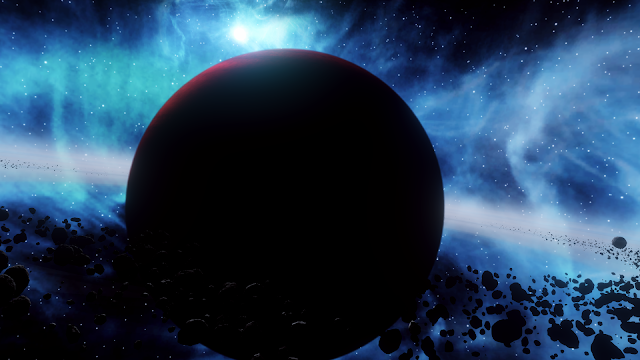

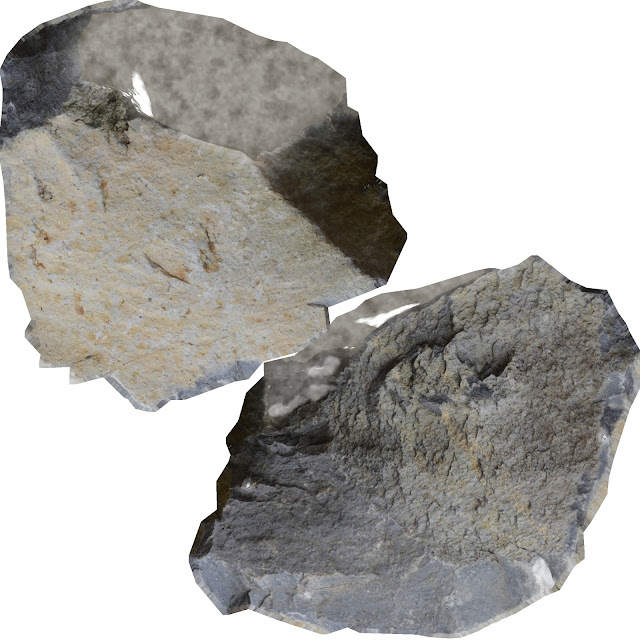

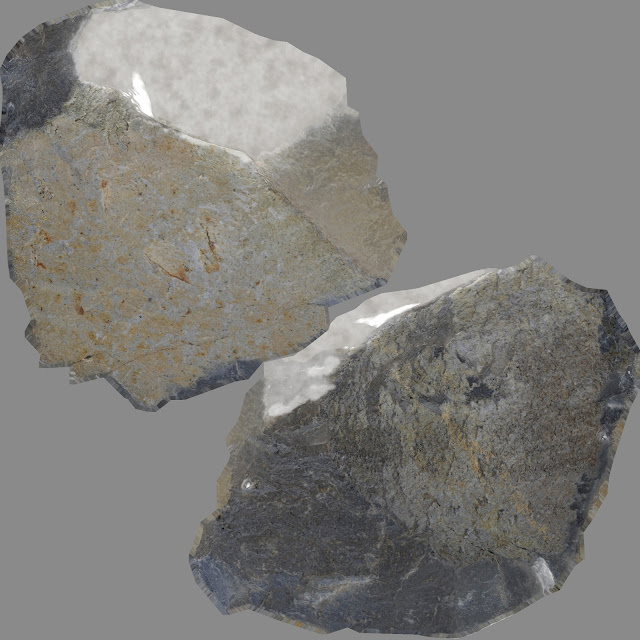

Shadow mapping is not done at the moment. What I do is sample the opacity of a given object placed on top of the volume. So atm, I would use this feature to cast shadows from clouds, as shown in the pictures. Another use case that comes to mind would be a corridor with windows. I sample this map along the volume after some down-sampling and blur.

For realism, I wanted to control the amount of blur based on the distance from the shadow caster to the hit point, but I will do that in the future.
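The down-sample, blur and lookup chain can be sketched like this in Python. It is only an illustration under assumptions: the real thing runs on the GPU, and the function names, the box filter and the nearest-neighbour lookup here are hypothetical choices, not what Fog Volume actually does.

```python
def downsample(opacity, factor):
    """Average factor x factor blocks of a square 2D opacity map."""
    n = len(opacity) // factor
    area = factor * factor
    return [[sum(opacity[y * factor + dy][x * factor + dx]
                 for dy in range(factor) for dx in range(factor)) / area
             for x in range(n)] for y in range(n)]

def box_blur(img, radius=1):
    """Box blur; samples outside the map are clamped to the nearest edge."""
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            total, count = 0.0, 0
            for dy in range(-radius, radius + 1):
                for dx in range(-radius, radius + 1):
                    yy = min(max(y + dy, 0), h - 1)
                    xx = min(max(x + dx, 0), w - 1)
                    total += img[yy][xx]
                    count += 1
            out[y][x] = total / count
    return out

def light_at(shadow_map, u, v):
    """Light left at (u, v) in [0, 1]: 1 minus the stored opacity."""
    h, w = len(shadow_map), len(shadow_map[0])
    x = min(int(u * w), w - 1)
    y = min(int(v * h), h - 1)
    return 1.0 - shadow_map[y][x]

# A 4x4 opacity map with an opaque caster in the top-left corner.
caster = [[1.0, 1.0, 0.0, 0.0],
          [1.0, 1.0, 0.0, 0.0],
          [0.0, 0.0, 0.0, 0.0],
          [0.0, 0.0, 0.0, 0.0]]
soft = box_blur(downsample(caster, 2))
print(light_at(soft, 0.1, 0.1), light_at(soft, 0.9, 0.9))
```

While marching through the volume, each sample would multiply its lighting by a lookup like `light_at`. A distance-based blur would simply pick a bigger `radius` the further the sample is from the caster.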

Shadow direction will be correct if the relative rotationX between the light and the volume doesn't exceed approx. 30º. This is because I reuse the volume coordinates to do the trick. To go beyond that, I would have to generate a new set of coordinates using the light matrix. This part is subject to change in the future, let's see where it ends.

You could have 360º volume shadows as long as the relative rotation between the light and the volume doesn't exceed those 30º. I added a checkbox to attach the light to the volume rotation (relative rotation = 0). This is how Robert Cupisz's volume light works. Once activated, I turn off the keyword under which the shadow direction was computed. I told you I tried to avoid wasting GPU power!
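If you want to check that limit from your own scripts, the test boils down to a wrapped angle difference. A tiny sketch in Python, where the function names are hypothetical and the 30º constant is just the approximate figure quoted above:

```python
MAX_RELATIVE_ROTATION_X = 30.0  # approximate limit from the text, in degrees

def relative_rotation_x(light_rot_x, volume_rot_x):
    """Signed difference between two X rotations, wrapped to [-180, 180)."""
    return (light_rot_x - volume_rot_x + 180.0) % 360.0 - 180.0

def shadow_direction_ok(light_rot_x, volume_rot_x):
    """True while the light stays within the limit relative to the volume."""
    return abs(relative_rotation_x(light_rot_x, volume_rot_x)) <= MAX_RELATIVE_ROTATION_X

print(shadow_direction_ok(350.0, 10.0))  # 20 degrees apart across the 0/360 seam
print(shadow_direction_ok(50.0, 10.0))   # 40 degrees apart
```

With the light attached to the volume the relative rotation is always 0, so the check always passes.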

Viewports:

Fog Volume will be rendered in both viewports. Note that the Scene view will be super expensive compared to the Game view when you have downsampling activated.

Intended platforms:

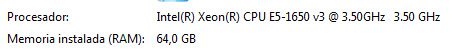

Mainly BIG machines, although simple fog works smoothly on mobile.

Future plans:

- Full volumetric lighting

- Presets system

- TOD && Sky scattering? Only if the masses ask for it

- VR

- Noise texture generator

- Subtractive sphere distance fields

- Interleaved sampling

- Modify TAA for HDR output

- More debug view modes

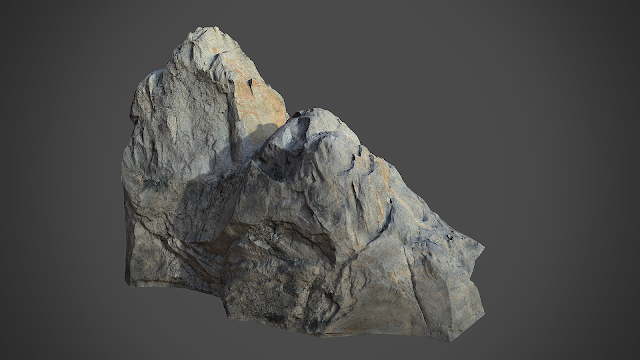

- Scene blur. Thanks Lee Perry-Smith for the ideas and refs